Content test results

Sitecore sends an email to content authors when a test that they have started is over. The email contains a link that you can click to see test results. You can also navigate to the page in the Experience Editor and open the test results from the Optimization tab.

A red dot on the tab indicates that there is an active test on the page. The ribbon of the Optimization tab looks like this:

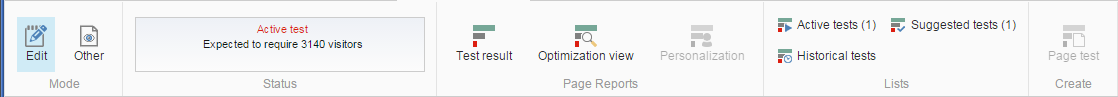

If there is an active test, the Status shows how many visitors are required for the test to finish. In this example, the status shows that it will take another 3140 visitors to finish the test. If there is enough historical data, the status instead shows the number of days it will take for the test to finish.
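The relationship between the two status formats can be illustrated with a small sketch. This is not Sitecore's actual calculation: the function name and the average daily traffic figure are hypothetical, and the sketch simply assumes that the remaining visitor count is divided by the average number of visitors per day.

```python
import math


def estimated_days_remaining(visitors_remaining: int, avg_daily_visitors: float) -> int:
    """Rough number of days until the test has seen enough visitors.

    Assumption: historical data yields an average daily visitor count,
    and the estimate is rounded up to whole days.
    """
    if avg_daily_visitors <= 0:
        raise ValueError("avg_daily_visitors must be positive")
    return math.ceil(visitors_remaining / avg_daily_visitors)


# 3140 visitors remaining (from the example above), with a hypothetical
# historical average of 400 visitors per day.
days = estimated_days_remaining(3140, 400)
```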

Test winners

The user who created a test can select an experience and click Pick as winner to end the test and declare that experience the winner. This overrules the test objective defined for the test. Otherwise, Sitecore finds a winner.

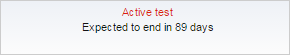

When a test winner has been found, the Test summary window is displayed.

As part of the results, you can see:

- Other pages that you can optimize and test
- Predictions of specific visitor segments that prefer a different experience than the test winner

In this example, the test has identified that visitors arriving during workdays prefer the original experience instead of the winner. You may want to create specific personalization rules that target these visitors, and then run a test with the new rules.

Test results

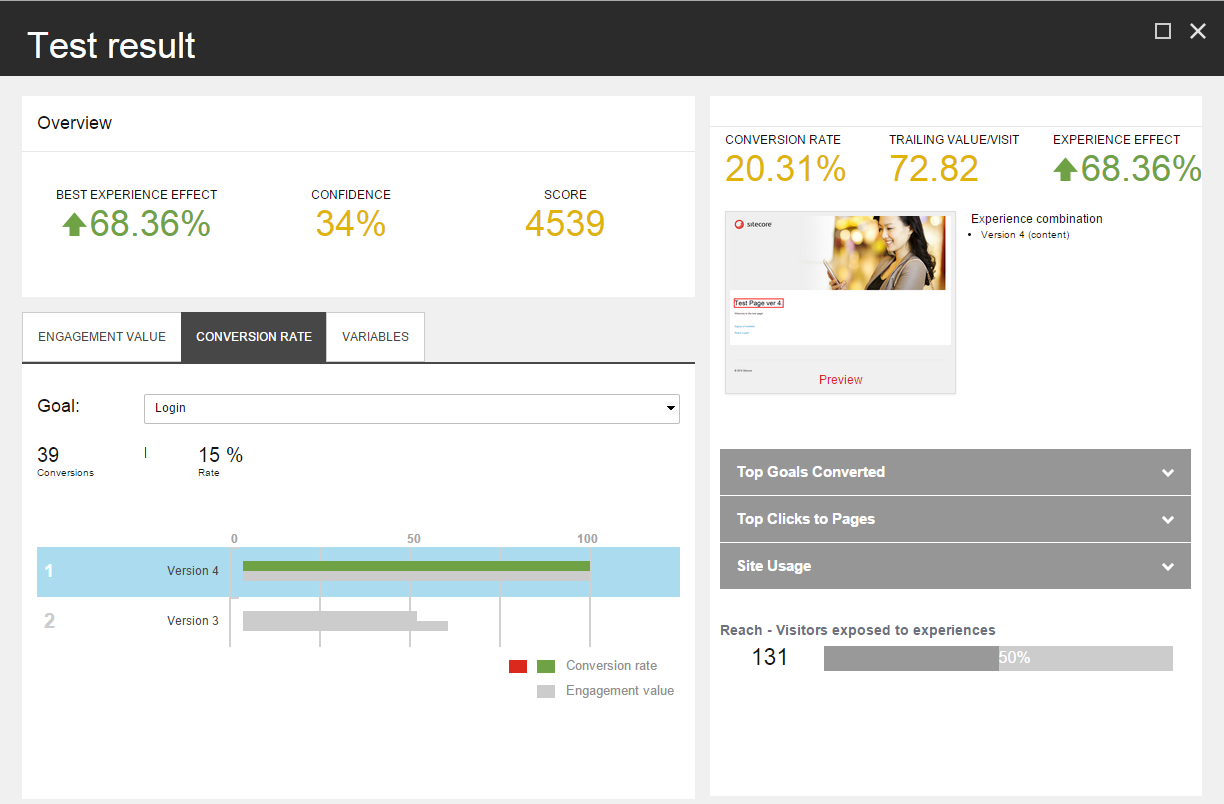

You can click the Test result button in the Optimization tab to see the current test result report for the page.

The Overview shows:

- Best experience effect – the performance of the best performing experience, calculated as the difference in percent between the trailing value per visit of the original and the best experience in the test.
- Confidence level – the statistical confidence level that the test has reached. If there are fewer than 100 visits, the confidence level is 0.
- Score – the test score assigned to the creator of the test, based on the engagement value gained and the importance of the tested page.

The Engagement Value, Conversion Rate, and Variables tabs show bar charts with the tested experiences. When you select one of the experiences, the Details panel shows details about this experience.

Below the Details panel there are three expandable sections with more information about the currently selected experience.

Engagement value

This tab contains a graph that lists the experiences in the test in order of their performance, as measured in terms of engagement value. The best performing experience is assigned a value of 100, and the bars in the chart indicate the relative performance of the remaining experiences. The bar is green for experiences that perform better than the original experience; the bar is red for experiences that perform worse than the original.
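The ordering, scaling, and coloring described above can be sketched as follows. This is an illustration only: it assumes that bars are scaled linearly against the best experience, and the function name, the sample values, and the "neutral" label for the original experience itself are hypothetical.

```python
def relative_performance(values: dict[str, float], original: str) -> list[tuple[str, float, str]]:
    """Return (experience, relative score, bar color), best experience first.

    Assumption: scores are scaled linearly so that the best experience gets 100.
    """
    best = max(values.values())
    if best <= 0:
        raise ValueError("at least one experience needs a positive value")
    baseline = values[original]
    chart = []
    for name, value in sorted(values.items(), key=lambda item: item[1], reverse=True):
        if value > baseline:
            color = "green"    # performs better than the original
        elif value < baseline:
            color = "red"      # performs worse than the original
        else:
            color = "neutral"  # the original itself (label is an assumption)
        chart.append((name, round(100 * value / best, 1), color))
    return chart


# Hypothetical engagement values per experience.
chart = relative_performance(
    {"Original": 2.0, "Variant A": 2.5, "Variant B": 1.5},
    original="Original",
)
```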

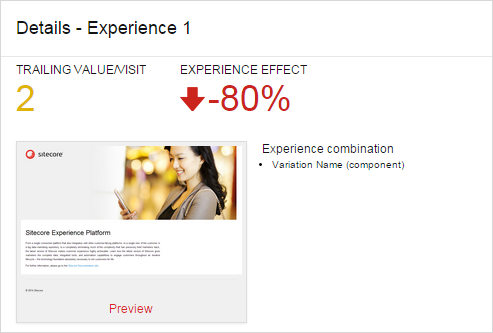

Select an experience to see two general measurements for it in the Details panel:

- Trailing value/visit – the total engagement value, counting only page views occurring after the visitors encountered the page being tested, divided by the number of visits exposed to the experience.
- Experience effect – the negative, positive, or neutral effect of the experience. This is the difference in percent between the trailing value/visit of the selected experience and that of the default experience.
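The two measurements can be sketched from raw page-view data. The data layout (each visit as an ordered list of page and engagement value pairs), the function names, and the sample pages are hypothetical. The sketch also assumes that "after" excludes the view of the tested page itself and that the effect is measured relative to the default experience.

```python
PageView = tuple[str, float]  # (page path, engagement value earned on that view)


def trailing_value(page_views: list[PageView], tested_page: str) -> float:
    """Sum the engagement value of page views after the first view of tested_page."""
    for index, (page, _) in enumerate(page_views):
        if page == tested_page:
            return sum(value for _, value in page_views[index + 1:])
    return 0.0  # the visit never reached the tested page


def trailing_value_per_visit(visits: list[list[PageView]], tested_page: str) -> float:
    """Total trailing value divided by the number of visits exposed to the experience."""
    if not visits:
        return 0.0
    return sum(trailing_value(visit, tested_page) for visit in visits) / len(visits)


def experience_effect(selected: float, default: float) -> float:
    """Difference in percent between the selected and the default trailing value/visit."""
    if default == 0:
        raise ValueError("default trailing value/visit must be non-zero")
    return 100 * (selected - default) / default


default_visits = [
    [("/home", 0), ("/offer", 0), ("/signup", 10)],
    [("/offer", 0), ("/about", 0)],
]
variant_visits = [
    [("/home", 5), ("/offer", 0), ("/signup", 10), ("/thanks", 5)],  # the 5 on /home is ignored
    [("/offer", 0), ("/signup", 10)],
]

default_tvv = trailing_value_per_visit(default_visits, "/offer")
variant_tvv = trailing_value_per_visit(variant_visits, "/offer")
effect = experience_effect(variant_tvv, default_tvv)
```

Value earned before the tested page, such as the 5 points on `/home` in the first variant visit, does not count toward the trailing value.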

The Details panel also shows a preview of the experience. You can click Preview to edit the selected experience.

Conversion rate

This tab shows the conversion rate for all the experiences. Select a goal from the Goal dropdown list to see how many times the goal was triggered. The conversion rate is expressed as a percentage of the visits in which the selected experience was displayed. If visits contain more than one conversion on average, the rate is greater than 100%.
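As a minimal sketch of why the rate can exceed 100%, assume the rate is the number of conversions divided by the number of visits, multiplied by 100. The function name and the figures are hypothetical.

```python
def conversion_rate(conversions: int, visits: int) -> float:
    """Conversions as a percentage of the visits in which the experience was displayed."""
    if visits <= 0:
        return 0.0
    return 100 * conversions / visits


single = conversion_rate(30, 200)     # fewer conversions than visits
repeated = conversion_rate(250, 200)  # some visits triggered the goal more than once
```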

The bar chart shows statistics for the experiences in the test.

When you select an experience in the bar chart, the Details panel shows the conversion rate percentages for the selected experience in addition to the trailing value per visit and the experience effect.

Variables

The Variables tab shows a graph indicating the engagement value of each variable in the test.

The Details panel shows the trailing value per visit and the experience effect.

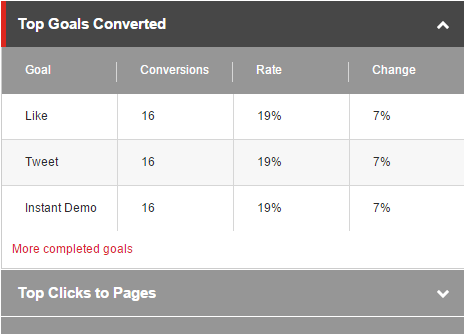

Top goals converted

Expand the Top Goals Converted section to see the goal conversion results for the selected experience.

The table shows the following metrics:

- Goal – the goals triggered by visitors exposed to the test
- Conversions – the number of conversions
- Rate – the number of goal conversions after viewing the selected experience divided by the number of visits, multiplied by 100
- Change – the change between the original experience and the selected experience
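The columns of this table can be sketched as follows. The input layout (one goal name per conversion), the function name, and the sample goals are hypothetical. The text does not say how Change is computed, so the sketch assumes it is the difference between the two rates in percentage points.

```python
from collections import Counter


def top_goals(selected_goals: list[str], selected_visits: int,
              original_goals: list[str], original_visits: int) -> list[dict]:
    """Build Goal / Conversions / Rate / Change rows, most converted goal first."""
    if selected_visits <= 0 or original_visits <= 0:
        raise ValueError("visit counts must be positive")
    selected_counts = Counter(selected_goals)
    original_counts = Counter(original_goals)
    rows = []
    for goal, conversions in selected_counts.most_common():
        rate = 100 * conversions / selected_visits
        original_rate = 100 * original_counts[goal] / original_visits
        rows.append({
            "goal": goal,
            "conversions": conversions,
            "rate": rate,
            # Assumption: change is expressed in percentage points.
            "change": rate - original_rate,
        })
    return rows


rows = top_goals(
    selected_goals=["Newsletter"] * 30 + ["Brochure"] * 10, selected_visits=200,
    original_goals=["Newsletter"] * 20 + ["Brochure"] * 12, original_visits=200,
)
```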

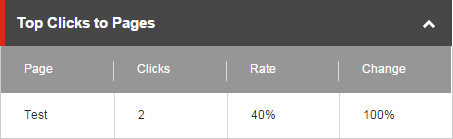

Top clicks to pages

Expand the Top Clicks to Pages section to see the top clicks for the selected experience.

The table shows these metrics:

- Page – the name of the next page that was clicked in the session
- Clicks – the total number of clicks to the page
- Rate – the number of clicks to the page after viewing the selected experience divided by the number of visits, multiplied by 100
- Change – the change between the original experience and the selected experience

Site usage

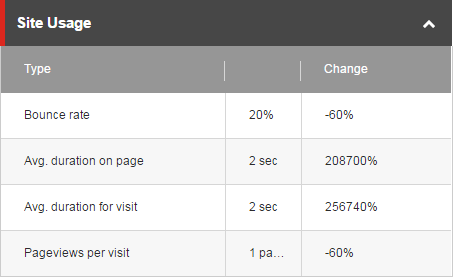

Expand the Site Usage section to see a summary of the site usage for the selected experience.

The table shows the following metrics:

- Type defines the value in the second column:
  - Bounce rate (percent) – the percentage of visitors who visit this page and then bounce (leave the site) without viewing other pages.
  - Avg. duration on page (number in seconds/minutes) – the average duration that visitors stay on the page
  - Avg. duration for visit (number in seconds/minutes) – the average duration that visitors stay on the site
  - Pageviews per visit (number of pageviews) – the average number of different pages that visitors look at in one visit
- Change shows the change of this measurement compared to the original.
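The four Type measurements can be sketched from per-visit data. The data layout (each visit as a list of page and seconds pairs), the function name, and the sample visits are hypothetical. The sketch assumes that every visit includes the tested page, that a bounce is a visit with a single page view, and that pageviews per visit counts distinct pages, following the wording above.

```python
from statistics import mean

PageView = tuple[str, int]  # (page path, seconds spent on that view)


def site_usage(visits: list[list[PageView]], tested_page: str) -> dict[str, float]:
    """Summarize bounce rate, durations, and pageviews for one experience."""
    if not visits:
        raise ValueError("at least one visit is required")
    bounces = sum(1 for visit in visits if len(visit) == 1)
    on_page = [seconds for visit in visits for page, seconds in visit if page == tested_page]
    return {
        "bounce_rate": 100 * bounces / len(visits),
        "avg_duration_on_page": mean(on_page) if on_page else 0,
        "avg_duration_for_visit": mean(sum(seconds for _, seconds in visit) for visit in visits),
        "pageviews_per_visit": mean(len({page for page, _ in visit}) for visit in visits),
    }


usage = site_usage(
    [
        [("/offer", 30)],                                  # bounce
        [("/offer", 60), ("/signup", 120)],
        [("/home", 30), ("/offer", 90), ("/signup", 60)],
        [("/offer", 60), ("/about", 60)],
    ],
    tested_page="/offer",
)
```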

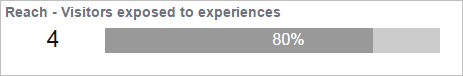

Reach

This shows the number and the percentage of visitors exposed to this experience.

As described above, some results are calculated from the number of visits to the page being tested. This number can differ from the number of visitors, because a visitor can make multiple visits to the same page. The number of visits is not shown in the Test Result dialog.
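The difference between visitors and visits can be illustrated with a short sketch. The exposure log layout, the visitor IDs, and the function name are hypothetical, and the percentage is assumed to be relative to all visitors in the test.

```python
def reach(exposures: list[tuple[str, str]], experience: str) -> dict[str, float]:
    """Count distinct visitors and total visits for one experience.

    exposures holds one (visitor_id, experience) pair per visit.
    """
    all_visitors = {visitor for visitor, _ in exposures}
    exposed = {visitor for visitor, shown in exposures if shown == experience}
    visits = sum(1 for _, shown in exposures if shown == experience)
    percentage = 100 * len(exposed) / len(all_visitors) if all_visitors else 0.0
    return {"visitors": len(exposed), "percentage": percentage, "visits": visits}


log = [
    ("v1", "Variant A"), ("v1", "Variant A"),  # same visitor, two visits
    ("v2", "Variant A"),
    ("v3", "Original"),
    ("v4", "Original"),
]
variant_reach = reach(log, "Variant A")
```

Here two visitors account for three visits, so a rate based on visits uses a larger denominator than the visitor count shown under Reach.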